Mission: Implausible 2.0 — Autonomous AI Agents Fails

You can’t throw a rock without hitting an autonomous “AI Agent”. Citrini Research predicts that in just a few years, AI Agents will be the invisible grease in our daily lives, permanently altering the macroeconomic landscape. While that prediction raises a lot of valid points, it paints a picture in which AI Agents seamlessly do their best work. As these AI Agents move from MVPs to the real world, are they ready, or are they ready to fail?

In AI diligence, I see how far off the mark the actual execution is. A big part of how I support investors and accelerators is product testing: making sure that the product actually delivers on what it promised, is integrated into customer workflows, and solves a real pain point at venture scale, with the help of AI.

This month, I was swimming against the current trying to navigate the fragmented world of real estate, determined to find a new apartment to rent in a market where everything was “powered by AI.”

Scenario 1: When an AI Agent is an Overengineered Solution

Not surprisingly, many property management companies and landlords are turning to AI Agents to schedule (self-)guided tours, and those agents are struggling to navigate dates and times! Wouldn’t it be easier and better to just text/email me the link to a calendar widget?

This is also a trend in healthcare that I pointed out at LATechWeek (YouTube recording here): clinics are jumping on the bandwagon of AI Agents to help patients schedule appointments. These AI Agents would hang up on non-native English speakers, or when a patient was “difficult to understand”, reducing their access to care!

Jaiden Dittfach makes me feel seen, as she describes her super frustrating experiences navigating overengineered AI solutions in her everyday life in this YouTube video.

Scenario 2: When AI Agents are Not Tied into Workflows and Pose Risks

As these autonomous AI Agents reminded me of my scheduled tours and prompted me to reconsider a property, they became a nuisance when the property was one I had already toured and had told the landlord was not a fit.

The AI Agents I’ve seen are typically (and unfortunately) not tied into workflows. As a result, I was occasionally ghosted or asked to reschedule the tour, because the property manager was not actually available. There I was, at the front door of a large apartment complex, trying to get in. This could have posed a safety risk if I had been trying to tour an occupied single-family rental home!
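Here’s a minimal Python sketch of what “tied into workflows” could look like for tour scheduling. Everything in it is hypothetical: `PropertyWorkflow`, `confirm_tour`, `should_send_reminder`, and the returned action strings are illustrative names, not any vendor’s actual API. The assumption is simply that the agent reads the same availability and “not a fit” records that the property manager maintains.

```python
from dataclasses import dataclass, field
from datetime import datetime
from typing import Set


@dataclass
class PropertyWorkflow:
    """Hypothetical shared record that both the agent and the manager read and write."""
    manager_free_slots: Set[datetime] = field(default_factory=set)
    declined_by: Set[str] = field(default_factory=set)  # prospects who said "not a fit"


def should_send_reminder(workflow: PropertyWorkflow, prospect: str) -> bool:
    """Stop nudging people who already told the landlord the property is not a fit."""
    return prospect not in workflow.declined_by


def confirm_tour(workflow: PropertyWorkflow, prospect: str, slot: datetime) -> str:
    """Confirm a tour only if the manager is actually free at that time."""
    if prospect in workflow.declined_by:
        return "do_not_contact"
    if slot not in workflow.manager_free_slots:
        # Propose alternatives up front instead of confirming and ghosting later.
        return "offer_other_times"
    workflow.manager_free_slots.discard(slot)  # the slot is now taken
    return "confirmed"
```

The point of the sketch is the order of the checks: the agent consults the shared record before it confirms or reminds, so a prospect never shows up to a locked door.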

Madeline Wells shares her experience navigating the rental search in the Bay Area, and reminds us that AI used in screening applications may discriminate!

Scenario 3: When Autonomous AI Agents Fail and Cause Harm

Because autonomous AI Agents are sold as having no humans in the loop, how they handle questions they don’t know the answer to, or what happens when they inevitably error out, has real consequences for customers.

One of the rental properties I encountered in my apartment search was using an AI system to screen applicants; I was its second applicant. I was naive to expect that things would just work. I’m an independent contractor who banks at a credit union and takes meetings throughout the day, and my application, which I paid $65 for, was cancelled twice by AI (!). First, when I took “too long” to reply to its prompt asking me to re-upload my bank statements: a mere 25 minutes, because I was in a meeting and wasn’t checking my phone/email! And then again, because it didn’t accept those bank statements as valid (!).

That’s because its terms and conditions only promise to analyze W2s and bank statements from the top 10 major banks. Wouldn’t it have been better if, during screening, the property management company (or similar) were looped in whenever an approved/denied decision can’t be reached? Doubly so if the bank statements don’t look like the training data. Better yet, prompt the user for which bank they use, as an extra layer of verification. And no cancelling applications that applicants paid for!
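To make that concrete, here’s a rough Python sketch of a human-in-the-loop fallback for screening. It is an illustration under stated assumptions, not how any real screening product works: `SUPPORTED_BANKS`, `screen`, `on_reply_timeout`, and the action strings are all hypothetical, and the bank names are placeholders.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Outcome(Enum):
    APPROVED = "approved"
    DENIED = "denied"
    NEEDS_HUMAN_REVIEW = "needs_human_review"


@dataclass(frozen=True)
class Decision:
    outcome: Outcome
    reason: str


# Placeholder names; a real system would list the banks it was validated on.
SUPPORTED_BANKS = frozenset({"Example Bank A", "Example Bank B"})


def screen(declared_bank: str, statement_parsed: bool,
           meets_criteria: Optional[bool]) -> Decision:
    """Decide automatically only when the inputs are ones the system can handle."""
    # Ask the applicant which bank they use; unfamiliar banks go to a person.
    if declared_bank not in SUPPORTED_BANKS:
        return Decision(Outcome.NEEDS_HUMAN_REVIEW, "bank not in supported list")
    # A statement that could not be read, or an undetermined result, is not a denial.
    if not statement_parsed or meets_criteria is None:
        return Decision(Outcome.NEEDS_HUMAN_REVIEW, "could not verify automatically")
    if meets_criteria:
        return Decision(Outcome.APPROVED, "criteria met")
    return Decision(Outcome.DENIED, "criteria not met")


def on_reply_timeout(fee_paid: bool) -> str:
    """Never cancel a paid application because the applicant was slow to reply."""
    # The unpaid branch is an assumption of this sketch, not something from my experience.
    return "pause_and_notify_manager" if fee_paid else "send_reminder"
```

In this sketch, a credit union statement would route to `NEEDS_HUMAN_REVIEW` rather than a cancellation, and a 25-minute silence on a paid application would pause it and notify the property manager.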

Both times, the property manager had to step in to reactivate the application, after receiving my frantic emails with screenshots of the AI interactions. (While I ultimately got approved, I ended up renting at another place whose AI was better behaved.)

Scenario 4: Autonomous AI Agents as “Vibe-Coded AI Slop”

In the age of “AI slop” for content, we rarely talk about the “AI slop” of vibe-code.

While I recommend that non-technical founders use interactive wireframes for their MVP, I completely understand why vibe-coding one is attractive. Vibe-coding an MVP also prepares the founder for interviewing prospective collaborators for the next phase of the product.

I’ll also add that the vibe code generated by LLMs is getting better every 6 months, as you’d expect! Ten years ago, it took me weeks (if not months) to launch a “hello world” Python API in a Docker container that served predictions from a simple regression model. Now it takes an hour, if you know what you’re doing and can fix the handful of vibe-coded mistakes.

The more standard/templatized the implementation you’re vibe coding, and the smaller the cost when the answer is wrong, the fewer issues I’d expect in getting the code to actually run. That’s because a standard implementation should show up more often in the training data.

As we all know, LLMs are trained on publicly available content, such as books and code repositories. We would expect training data that incorporates the vast library of highly regarded, out-of-copyright books to be of great quality (as long as it’s relevant to the questions at hand).

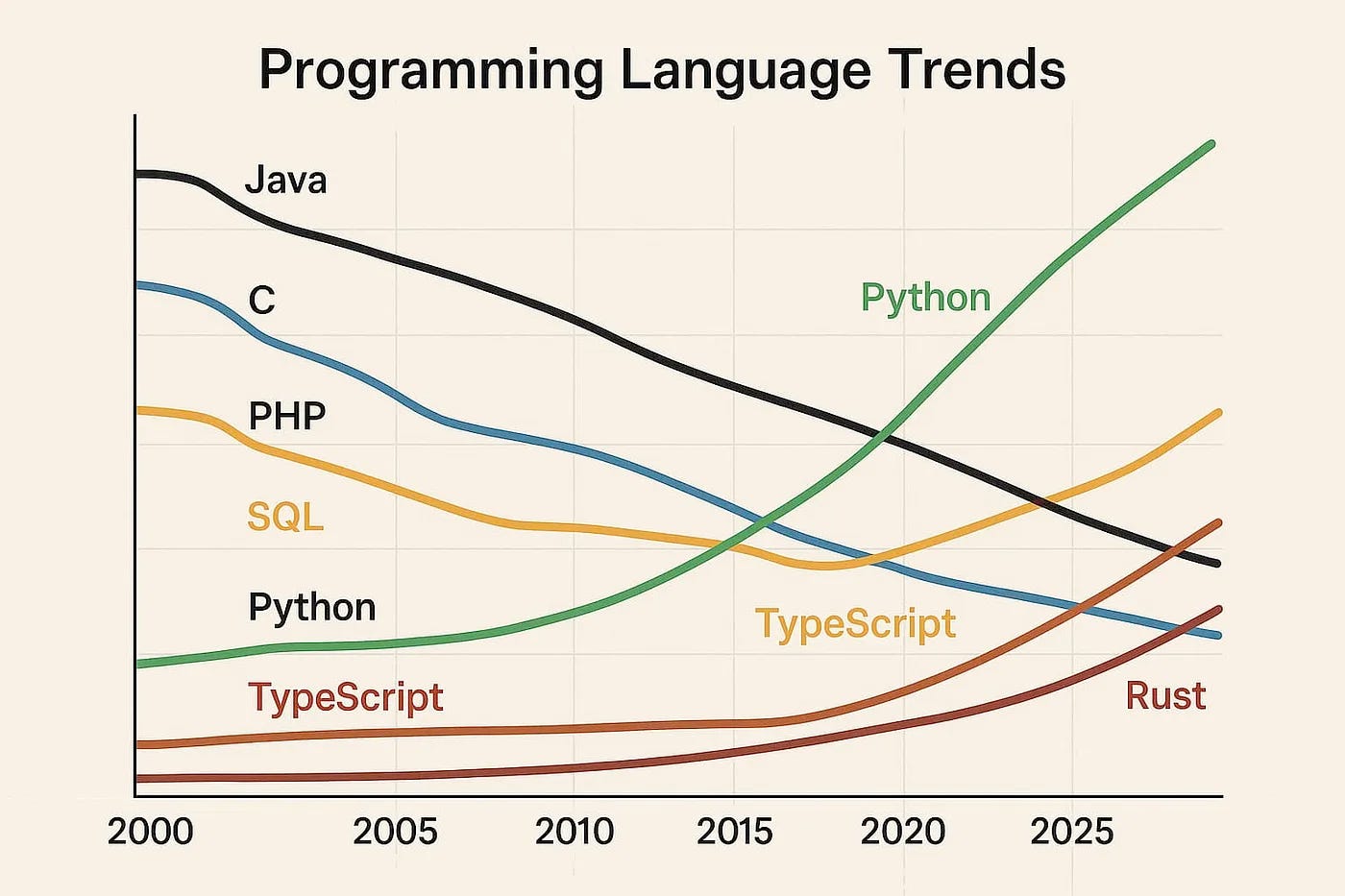

Training data for software development is of lower quality, because of what’s publicly available! In 2023, GitHub had over 420 million repositories, with 28 million public. While that seems like a big number, let’s take a step back and think about who makes their code public. Typically, it’s individuals working on passion projects and large open-source projects, such as those of the Apache Software Foundation. Most companies are not sharing their code publicly; that’s one of their moats. As a result, I would expect more mistakes and issues in publicly available code! And programming language preferences change over time; one example is shown in the figure from this Medium article.

LLMs may or may not account for these shifts, along with other issues mentioned here (!). We saw this in one of my prior Substacks, where the LLMs suggested deprecated software packages for processing images of tax forms.

It’s also understandable that we’d all like to automate relatively simple, routine, administrative tasks. And, as I point out in another previous Substack, performance metrics for AI Agents trying to automate these tasks will look deceptively good, because they’ll incorrectly be compared to the status quo of human scheduling without calendar widgets!

What to Consider Discussing in Diligence